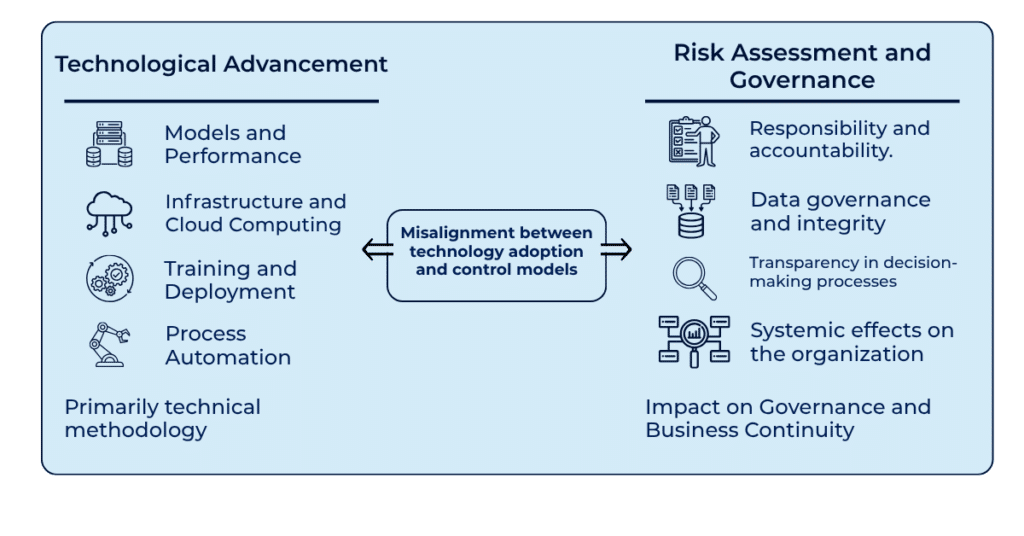

Artificial intelligence is rapidly entering business processes, influencing operational decisions, customer interactions, and business models. However, the discussion often tends to focus on technological aspects, while the real change lies in governance. The adoption of AI systems introduces new issues related to accountability, data control, decision transparency, and risk management. More than just a technological tool, AI therefore represents a governance and risk management challenge for the entire organization.

Artificial intelligence is radically changing the way companies operate. It is not just a new application, but a new way of thinking about decision-making processes, customer interaction, and the evolution of business models themselves. However, while the industry celebrates the promises of automation and algorithmic efficiency, there is a subtle and dangerous flaw in the prevailing narrative. The discussion often focuses exclusively on technological aspects: which models are faster, which cloud stack to use, how to optimize training. For those responsible for ensuring security and operational continuity, it is crucial to recognize that this perspective is, at best, incomplete.

The introduction of AI into business operations brings a level of complexity that goes far beyond the simple management of servers or legacy applications. It raises new and challenging questions about legal responsibility, deep data control, and transparency in decision-making processes. For enterprise security, AI is not just another tool to integrate; it is a systemic risk vector that requires a restructuring of the defense model.

When we talk about cybersecurity, our battlefield is usually defined by the network perimeter, infrastructures (on-premise, cloud, SaaS), endpoints, and user identities. When secure data management is introduced into the equation, the boundaries become more blurred, and with AI the perimeter becomes completely fluid. If we treat AI as just “another piece of software to purchase” (everyone is using it, so why shouldn’t we?), we expose ourselves to risks that we may lack both the expertise and the tools to manage effectively.

The fundamental issue is that the adoption of AI systems shifts part of the risk from the operational layer (infrastructure, deterministic) to the decision-making layer (analytical or generative models, therefore non-deterministic). In a traditional context, if malware infects a server, we can isolate the system. In an AI environment, if a model is compromised or if the training data is poisoned or biased, the entire decision-making process of the organization can be manipulated. We are no longer just protecting static data; we are protecting the integrity of the algorithm itself.

This leads to a governance issue that is often overlooked in technical discussions.

If AI makes decisions that have operational impact, where does legal responsibility lie when those decisions are wrong?

Governance must define who is accountable when an algorithm unintentionally discriminates, violating regulations such as GDPR or the European AI Act. Technology alone cannot ensure that these responsibilities are met: a strong human and procedural framework is required.

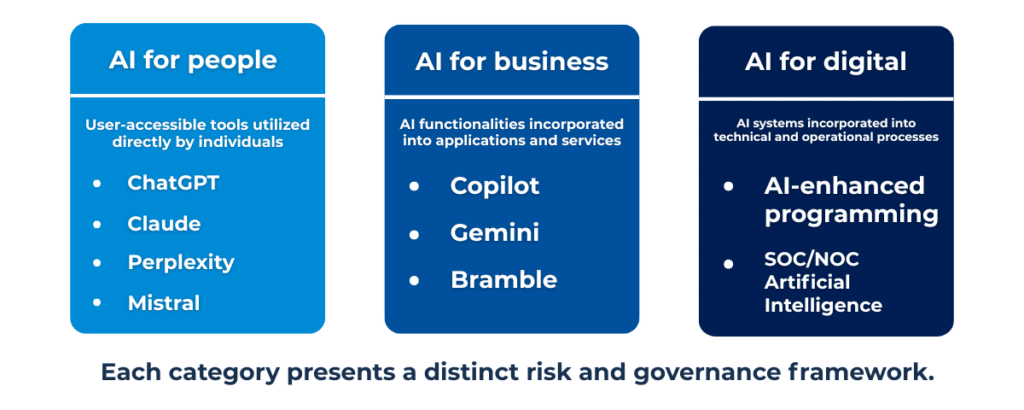

Without overstating the issue, it is important to define the areas or use cases where AI adoption becomes relevant. Taking inspiration from Gartner’s AI TRiSM governance model, we can simplify it into practical categories that can be assessed using consistent criteria.

The first simplified category is non-specialized generative AI: large commercial and open-source LLMs, which we can define as “AI for people,” such as ChatGPT, Perplexity, Mistral, LLaMA, etc. Anyone can access and use these tools for a wide range of purposes, including the analysis, processing, transformation, and synthesis of any data that, in a business context, is likely owned by the organization. This category of AI tools presents specific risks and challenges, requiring its own governance paradigm and dedicated risk analysis.

The second simplified category is specialized generative AI embedded within systems and applications that an organization has often already adopted and actively uses. We can define these as “AI for business,” with well-known examples such as Copilot, Gemini for Work, Rovo, etc. While it is true that users cannot typically access these functionalities freely (making Shadow AI less likely), it is equally evident that an organization may suddenly find itself with an “AI-powered feature” enabled—something it never explicitly intended to adopt—as part of an existing service or application. This category presents completely different risks, because while the system or service itself is usually governed, the specific AI functionality—bringing its own vulnerabilities and risks—is not.

The third and final category is “AI for digital,” which includes highly specialized tools dedicated to the automation, optimization, management, and monitoring of complex digital environments. This covers AI-assisted coding platforms, agentic AI, and AI-enhanced SOC and NOC systems, with examples such as GitHub Copilot, DeepCode AI, and Radiant. In this highly technical domain, the common denominator is the depth and pervasiveness with which these systems can operate. As a result, keeping humans in the loop becomes (almost) a matter of survival.

Just a month ago, AWS experienced significant service disruptions that, according to AWS itself, were caused by autonomous AI agents operating with insufficient limits on access and decision-making freedom. AWS classified the incident as “human error.” It is interesting how the principle “who makes the mistake pays for it” still applies even when a machine is involved: the machine executes, but accountability remains exclusively human.

It is easy to guess whose phone will ring when vulnerable code generated by a coding agent—validated and tested by another AI-based platform—goes into production with predictable consequences.

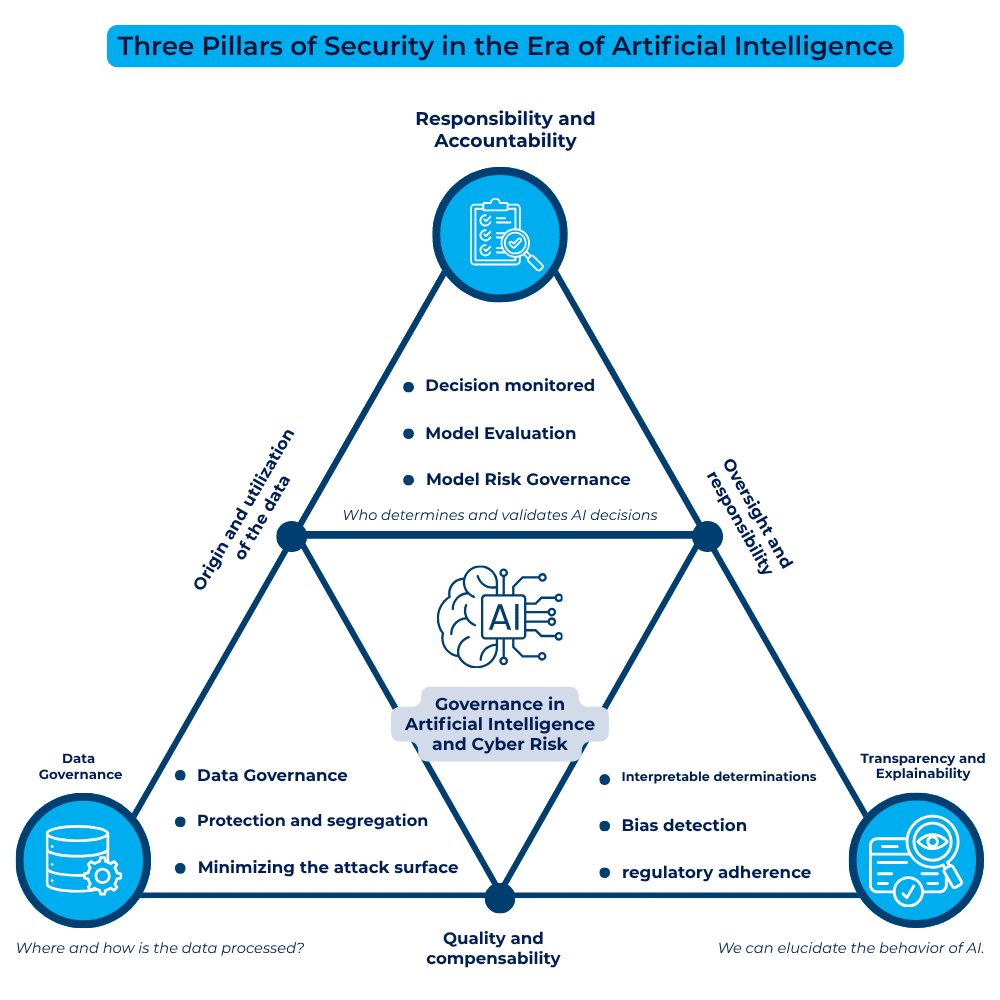

In light of these scenarios, it is clear that security strategy cannot remain unchanged. We must focus on three key areas where AI governance directly intersects with cybersecurity.

The first area is responsibility and accountability. In a world of advanced automation, full traceability of decisions is essential. A CISO cannot delegate responsibility for automated decisions to a black box. We must ensure the ability to audit the decision-making process: what data was used, which parameters the model applied, and who supervised the final action. This requires integrating Model Risk Management into traditional enterprise Risk Management.
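As a rough illustration of what "auditable" could mean in practice, the sketch below models one automated decision as a record that must answer the three questions above: what data was used, which parameters were applied, and who supervised the action. The class name, field names, and the `audit_gaps` helper are all hypothetical and chosen for this example only; a real Model Risk Management process would define its own schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionAuditRecord:
    """One automated decision, with what an auditor needs to reconstruct it."""
    decision_id: str
    model_id: str            # which model (and version) produced the decision
    input_data_refs: tuple   # what data was used (references, not the data itself)
    parameters: dict         # which parameters the model applied
    supervised_by: str       # who supervised the final action ("" if nobody did)
    outcome: str
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def audit_gaps(record: DecisionAuditRecord) -> list:
    """Return the audit questions this record cannot answer."""
    gaps = []
    if not record.input_data_refs:
        gaps.append("data used")
    if not record.parameters:
        gaps.append("parameters applied")
    if not record.supervised_by:
        gaps.append("human supervisor")
    return gaps


# Hypothetical example: a decision that nobody supervised.
record = DecisionAuditRecord(
    decision_id="txn-0001",
    model_id="credit-scoring:1.4",
    input_data_refs=("crm/customers/123",),
    parameters={"threshold": 0.7},
    supervised_by="",
    outcome="denied",
)
print(audit_gaps(record))  # ['human supervisor']
```

A record with any gap is, in this sketch, exactly the "black box" situation the paragraph warns against: the decision happened, but part of its trail cannot be reconstructed.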

The second area is data control. AI depends on data, but security depends on its origin and integrity. If a model interacts with critical data without adequate safeguards, the risk of data leakage or model inversion attacks rises sharply. AI governance must enforce strict rules on where data is processed, ensuring that the boundary between public cloud and private infrastructure is respected, not only for compliance reasons but also to limit the attack surface.

The third area is transparency. The concept of a “black box” is unacceptable from both a security and trust perspective. If we cannot explain why AI denied a service or approved a transaction, we cannot guarantee the transparency required by regulations. This implies adopting Explainable AI techniques not as an option but as a security requirement, so that bias or manipulation can be identified even after deployment.

It is important to understand that, more than a technical issue, AI adoption represents a governance challenge for the entire organization. There is often an expectation that IT will manage these new dynamics alone, but true risk management is deeply tied to human behavior and corporate culture. The role of policymakers and executives is to transform AI from a mere technology into a governed asset, managed with integrity.

The speed of innovation must be balanced with the ability to govern associated risks. This is not about slowing down adoption, but ensuring that every new use case undergoes a risk assessment that includes algorithm security, input data security, and output process security.
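One way to picture such a gate is sketched below: a use case carries a rating for each of the three dimensions named above (algorithm, input data, output process) and cannot receive an overall rating until all three have been assessed. The three-level scale, the class and field names, and the rule that the overall rating is the worst of the three are assumptions made for this example, not a prescribed methodology.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# The three dimensions every new use case must be assessed on.
DIMENSIONS = ("algorithm", "input_data", "output_process")


@dataclass
class UseCaseAssessment:
    name: str
    algorithm: Optional[Risk] = None
    input_data: Optional[Risk] = None
    output_process: Optional[Risk] = None

    def missing_dimensions(self) -> list:
        """Dimensions that have not been assessed yet."""
        return [d for d in DIMENSIONS if getattr(self, d) is None]

    def overall(self) -> Risk:
        """Worst rating across the three dimensions (an assumed rule)."""
        missing = self.missing_dimensions()
        if missing:
            raise ValueError(
                f"{self.name}: not assessed for {', '.join(missing)}"
            )
        return max(self.algorithm, self.input_data, self.output_process)


# Hypothetical use case, assessed in two steps.
draft = UseCaseAssessment(
    "invoice-summarizer", algorithm=Risk.LOW, input_data=Risk.HIGH
)
print(draft.missing_dimensions())  # ['output_process']

draft.output_process = Risk.MEDIUM
print(draft.overall().name)        # HIGH
```

Refusing to produce a rating for a partially assessed use case does not slow adoption by itself; it only makes the missing piece of the assessment visible before go-live.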

In essence, AI is a responsibility that must be managed with the same level of caution applied to operational continuity or the protection of financial and personal data.

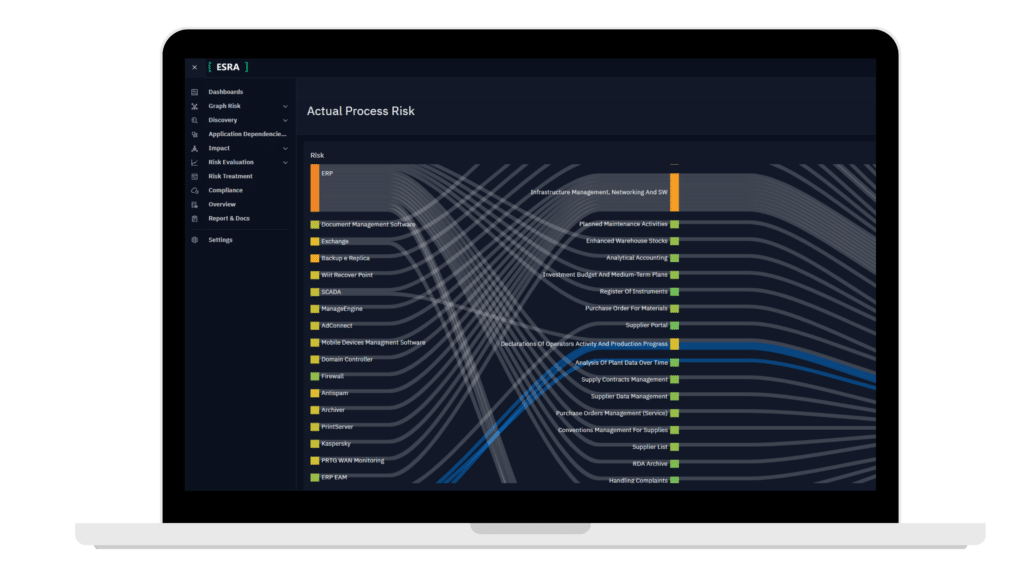

For security leaders, it is essential to start mapping existing use cases not by function, but by their potential risk. Every AI model in production should be treated as a complex information system, included within the risk management model and Business Impact Analysis (BIA), with a clear mapping of its contribution to business processes.
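To make the idea of mapping by risk rather than by function concrete, here is a minimal sketch. It assumes a BIA has already assigned a criticality score to each business process and that each AI system in the inventory lists the processes it contributes to; a system then inherits the highest criticality among those processes. All names, scores, and the inheritance rule are hypothetical and serve only to illustrate the mapping.

```python
# Hypothetical BIA output: criticality per business process
# (1 = low, 4 = critical).
BIA_CRITICALITY = {
    "customer-support": 2,
    "loan-approval": 4,
    "internal-search": 1,
}

# Hypothetical inventory: AI system -> (owning function, processes supported).
AI_SYSTEMS = {
    "support-chatbot": ("Customer Care", ["customer-support"]),
    "credit-scoring-model": ("Finance", ["loan-approval"]),
    "doc-assistant": ("IT", ["internal-search", "customer-support"]),
}


def rank_by_risk(systems: dict, criticality: dict) -> list:
    """Order AI systems by the most critical process they contribute to."""
    ranked = []
    for name, (_function, processes) in systems.items():
        # Assumed rule: a system is as critical as its most critical process.
        score = max(criticality[p] for p in processes)
        ranked.append((name, score))
    # Highest criticality first; ties broken alphabetically by name.
    return sorted(ranked, key=lambda pair: (-pair[1], pair[0]))


for name, score in rank_by_risk(AI_SYSTEMS, BIA_CRITICALITY):
    print(score, name)
# 4 credit-scoring-model
# 2 doc-assistant
# 2 support-chatbot
```

Note that the owning function plays no role in the ordering: the assistant owned by IT ranks alongside the customer-facing chatbot because both touch the same process.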

Generative AI therefore requires continuous monitoring, dedicated security testing enabled by specialized tools, and a vendor assessment that goes beyond simple SLAs.

More broadly, in risk management, the adoption of new technologies inevitably introduces new vulnerabilities, which in turn require increasingly advanced tools.

In the case of AI, the impact on risk is particularly evident. Its use, especially when it involves autonomous agents, introduces a high degree of unpredictability into process behavior. The rise of AI agents is making traditional risk management approaches increasingly ineffective. Today, we have machines that take initiative, generate code, introduce new processes, and progressively reduce the role of human intervention in decision-making.

More intelligent and autonomous machines require equally intelligent risk management systems—capable of responding with the same level of autonomy and speed.

AI adoption introduces a discontinuity in how risk is distributed within organizations, extending across processes and operational tools without full visibility into where and how it operates.

ai.esra continuously builds and updates the organization’s digital twin, making visible the applications actually in use and identifying AI components embedded within processes. This keeps risk analysis aligned with operational reality and prevents a gap from opening between what is governed and what is actually evolving in practice.

The goal is to make risk interpretable and manageable even as systems become more autonomous and their decisions increasingly unpredictable. From this point forward, the differentiator will be the ability to govern this complexity effectively and continuously.

ai.esra SpA – strada del Lionetto 6 Torino, Italy, 10146

Tel +39 011 234 4611

CAP. SOC. € 50.000,00 i.v. – REA TO1339590 CF e PI 13107650015

“This website is committed to ensuring digital accessibility in accordance with European regulations (EAA). To report accessibility issues, please write to: ai.esra@ai-esra.com”

© 2024 Esra – All Rights Reserved